Read next

CATDOLL 136CM Jing

Height: 136cm Weight: 23.3kg Shoulder Width: 31cm Bust/Waist/Hip: 60/54/68cm Oral Depth: 3-5cm Vaginal Depth: 3-15cm An...

Articles

2026-02-22

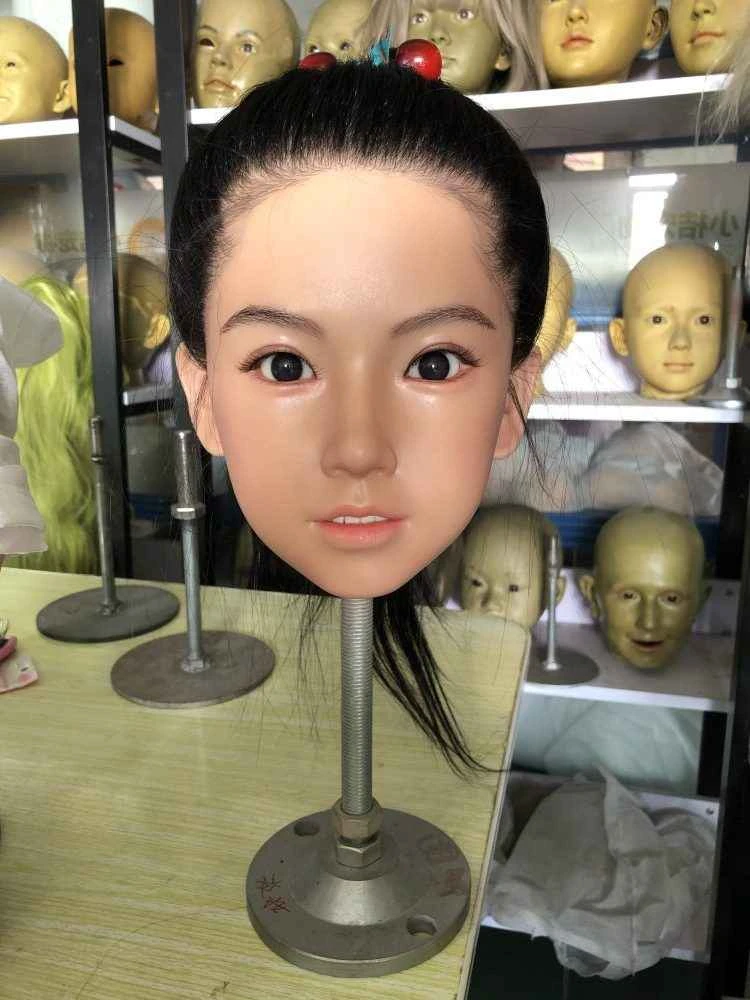

CATDOLL Vivian Hard Silicone Head

Articles

2026-02-22

CATDOLL 126CM Sasha (Customer Photos)

Articles

2026-02-22

CATDOLL 101cm TPE Doll with Anime A-Type Head – Cute Petite Body

Articles

2026-02-22